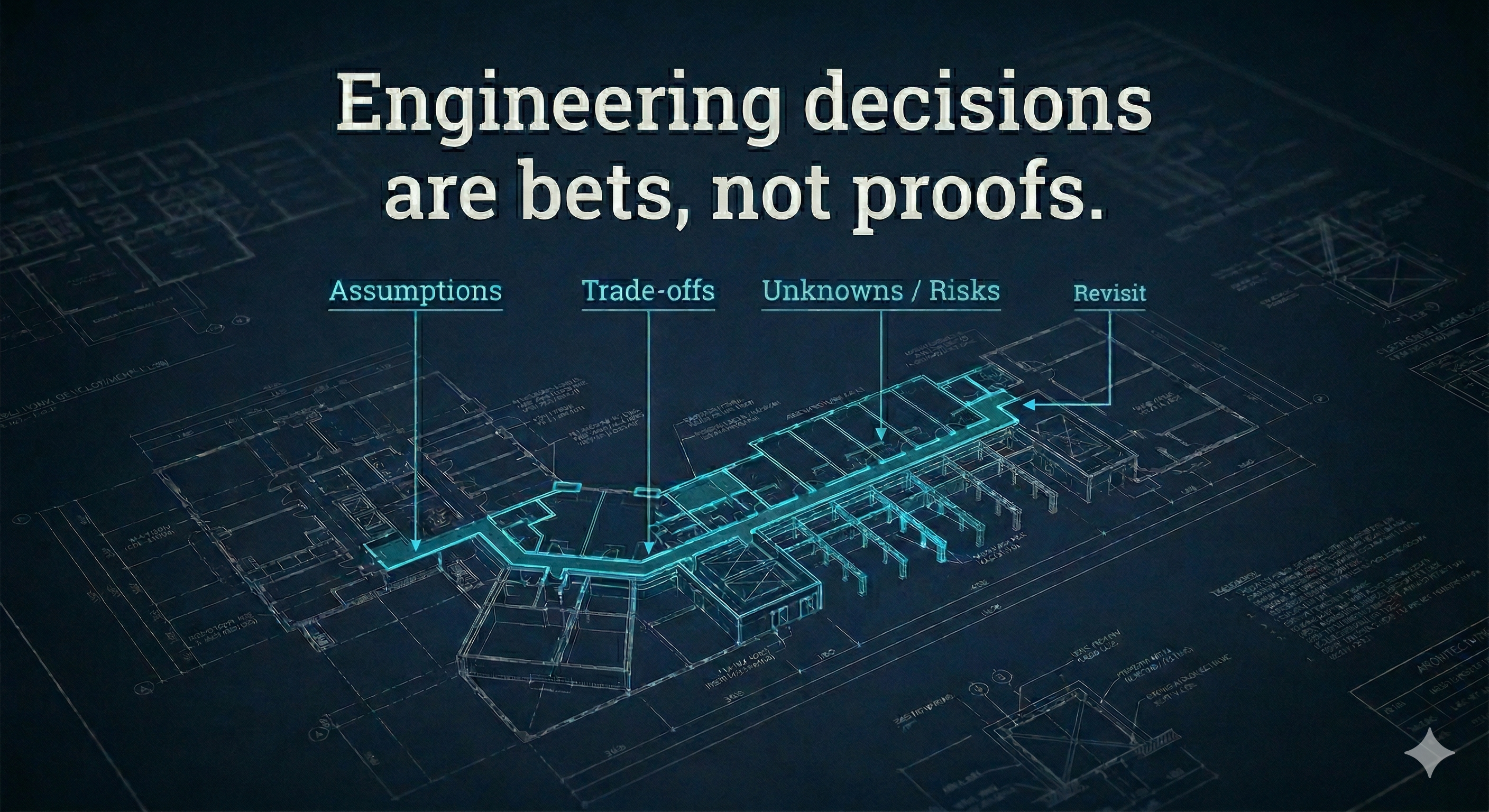

Engineering decisions are bets, not proofs

06 Apr 2026

Most engineering decisions are not proofs.

They are bets.

You choose a direction with incomplete information, under time pressure, and with trade-offs you cannot fully test in advance.

That does not make the decision weak.

It makes it real.

In a previous post, I wrote about how design by committee leaves engineering change unfinished. The deeper reason is simple: many organisations treat technical decisions as though they should be certain before the work begins. But most meaningful engineering decisions are not certainties. They are bets.

Annie Duke puts this well in Thinking in Bets. Decision-making is more like poker than chess. In chess, everything is visible. In poker, you work with hidden information, incomplete knowledge, and luck. You can play the hand perfectly and still lose.

Engineering decisions work the same way. You choose a direction based on what you know now, not what you will know in six months. The quality of a decision is not whether it later turned out to be right. It is whether it was a good bet given the information available at the time.

The outcome trap

Teams often judge decisions by outcomes alone: good outcome, good decision; bad outcome, bad decision.

But that is too simple. A good decision can have a bad outcome. A bad decision can get lucky.

Norman Kerth put it well in his Prime Directive for retrospectives:

"Regardless of what we discover, we understand and truly believe that everyone did the best job they could, given what they knew at the time, their skills and abilities, the resources available, and the situation at hand."

If the team made a reasonable bet with the information they had, the fact that it did not work out does not mean the decision was wrong. It means the context changed, or something was unknowable.

I have seen teams adopt a framework that later proved the wrong fit, and conclude that the decision-making process itself was broken. But when you looked back at the information they had at the time, the decision was reasonable. What changed was the context. New requirements appeared. The team's needs shifted. The framework's maintainer abandoned it.

I have also seen teams make a questionable architectural choice that happened to work because the product never scaled past the point where the weakness mattered. That is not a good decision. That is luck.

If you only evaluate decisions by results, you learn the wrong lessons. You punish reasonable bets that did not pay off and reward reckless ones that happened to land. The right response is to update the bet, not to blame the people who made it.
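To make that concrete, here is a minimal sketch with made-up numbers. The probabilities and payoffs are assumptions for illustration, not measurements from any real project, and `expected_value` and `review` are hypothetical helpers.

```python
# Illustrative only: the probabilities and payoffs below are invented.

def expected_value(p_success: float, gain: float, loss: float) -> float:
    """Average result per bet if the same bet were placed many times."""
    return p_success * gain - (1 - p_success) * loss


def review(ev: float, succeeded: bool) -> str:
    """Label a decision by the quality of the bet first, the outcome second."""
    if ev > 0:
        return "good bet, paid off" if succeeded else "good bet, unlucky"
    return "poor bet, got lucky" if succeeded else "poor bet, lost"


# A reasonable bet: likely to work, with a bounded downside.
reasonable = expected_value(p_success=0.75, gain=10, loss=8)
# A reckless bet: same stakes, but unlikely to work.
reckless = expected_value(p_success=0.25, gain=10, loss=8)

print(reasonable, review(reasonable, succeeded=False))
print(reckless, review(reckless, succeeded=True))
```

With these numbers the reasonable bet still fails one time in four, and the reckless one still lands one time in four. Judging either by a single result tells you very little about the bet itself.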

What good bets look like in engineering

Why now?

Not every decision needs to be made upfront. We once delayed a data model decision on purpose because the first integration was still unclear. Two weeks later, the integration taught us what mattered and the choice became obvious.

When a decision does need to be made, there should be an honest answer to "why this, why now."

What are the trade-offs?

The useful conversation is usually about what gets worse, not what gets better. You can feel it when someone says, "This speeds up deploys, but on-call gets harder for teams without strong Kubernetes skills." That is a real trade-off.

How small can we make the bet?

Instead of migrating the whole platform, move one service first. You learn more from the first awkward deployment than from three meetings about what might happen.

How will we test it?

If you are betting that Kubernetes will simplify deployment, the first migrated service should tell you whether that is true. If you are betting on event-driven architecture, the first event contract usually exposes the integration pain you were hand-waving in diagrams.

What would make us change our mind?

This is where teams fool themselves. The useful question is, "What result would make us stop and rethink?" A short architecture decision record (ADR) helps when it captures what we believed and what would trigger a change, not when it tries to predict every edge case.

Is it reversible?

Not every decision can be reversed cheaply, but reversible bets should move with less ceremony. If a choice can be rolled back in a day, decide quickly and learn. If it locks you into years of tooling and contracts, slow down and pressure-test it harder.

Some teams formalise this by constraining time and scope. Basecamp's Shape Up process, for example, treats projects as bounded bets: decide how much time something is worth, shape the work to fit, and if it does not ship within six weeks it does not automatically get more time. The principle is simple. Smaller bets create faster learning.

The learning loop

At University Press, when we migrated services onto a new platform, we treated each service as a bet. We expected some assumptions to be wrong. The point was not to be right the first time. It was to make being wrong early cheap.

One team discovered that their networking assumptions were off. Another found that the deployment configuration needed to be more flexible than anyone had anticipated. Those were not failures. That was the loop working.

That is the learning loop: make the decision, observe what happened, separate what was skill from what was luck, and update your beliefs.

Most teams skip the third step, separating skill from luck. They see a result and jump to "we were right" or "we were wrong" without asking how much came from the decision itself versus circumstances outside their control.

The lesson should not be "we should have known." It should be "we learned, and now we update."

Two heads are better than one, but not twenty

Getting a second perspective helps you spot blind spots, challenge assumptions, and test whether your reasoning holds up. Duke calls this the "buddy system" and I think she is right about it.

Two or three people pressure-testing a decision is valuable. But there is a threshold where adding more people stops improving the decision and starts slowing it down. That threshold is lower than most organisations think.

The best engineering decisions I have been part of involved a small group who understood the problem, had the relevant context, and were willing to disagree with each other. The worst involved large groups where nobody had enough context to push back meaningfully, so the discussion became about politics and risk aversion rather than trade-offs and evidence.

This is the same dynamic that turns ADRs into bureaucracy. The instinct is to involve more people to reduce risk. But past a certain point, involvement does not reduce risk. It diffuses ownership.

Pre-mortem and backcasting

Duke discusses two techniques in her book that help here: the pre-mortem and backcasting.

A pre-mortem starts with a simple question: imagine this decision failed. Why? This forces the team to think about risks they might otherwise dismiss because they are optimistic about the outcome. It is not about pessimism. It is about preparation.

Backcasting asks the opposite: imagine this decision succeeded. What did we do to get there? This helps identify the steps that actually matter and separates them from the busywork that feels productive but does not move the needle.

Both work backwards from an imagined outcome rather than forwards from current assumptions. They are also quick. A fifteen-minute pre-mortem before a significant decision is worth more than a two-hour planning meeting that tries to account for every scenario.

ADRs as bet records

If you think of decisions as bets, ADRs become something different. They are not proof that the team thought carefully. They are a record of the bet.

What did we believe at the time? What information did we have? What were the alternatives? What trade-offs did we accept? What confidence level did we have? And what would cause us to revisit this?

That is a useful document. It helps future teams understand not just what was decided, but the state of knowledge at the time. It makes it easier to judge whether the decision should be revisited because the context has changed, or whether the original reasoning still holds.

The key is brevity. A bet record should be short because the bet itself should be bounded. If the ADR is long, the decision is probably too big.
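As a rough sketch, the questions above could be captured in a small structure like the one below. The `BetRecord` class, its field names, and the example values are all hypothetical, chosen only to show how little a bet record needs to hold.

```python
from dataclasses import dataclass


@dataclass
class BetRecord:
    """A short ADR framed as a bet. Field names are illustrative."""
    decision: str
    belief: str                 # what we believed at the time
    information: list[str]      # what we knew when we decided
    alternatives: list[str]     # what else we considered
    trade_offs: list[str]       # what we accepted getting worse
    confidence: float           # rough subjective estimate, 0.0 to 1.0
    revisit_if: list[str]       # results that would make us rethink

    def triggered(self, observations: set[str]) -> list[str]:
        """Return the revisit triggers that have actually been observed."""
        return [t for t in self.revisit_if if t in observations]


# Hypothetical record; the details are invented for illustration.
record = BetRecord(
    decision="Move one service to Kubernetes first",
    belief="Kubernetes will simplify deployment",
    information=["Current deploys are slow and manual"],
    alternatives=[
        "Keep the current deployment setup",
        "Migrate the whole platform at once",
    ],
    trade_offs=["On-call gets harder for teams without strong Kubernetes skills"],
    confidence=0.7,
    revisit_if=[
        "first migrated service is harder to deploy than before",
        "on-call load rises for the pilot team",
    ],
)

observed = {"on-call load rises for the pilot team"}
print(record.triggered(observed))
```

If a record like this needs many more fields than these, that is usually a hint the bet is too big rather than that the template is too small.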

Show, don't tell

The best teams I have seen do not close a migration plan and declare certainty. They move one service, hit a rough edge, update the record, then move the second service with better knowledge.

You can watch the behaviour: an assumption breaks, the team rewrites the ADR while the details are fresh, guardrails get sharper, and the next team starts from a better place than the one before.

That is what good decision-making looks like in engineering. Not proving the future on paper, but learning faster than the uncertainty in front of you.