Why design by committee leaves engineering change unfinished

05 Apr 2026

Engineering change often gets stuck for one recurring reason: design by committee. Not because anyone has bad intentions, but because the pursuit of alignment quietly replaces the pursuit of learning.

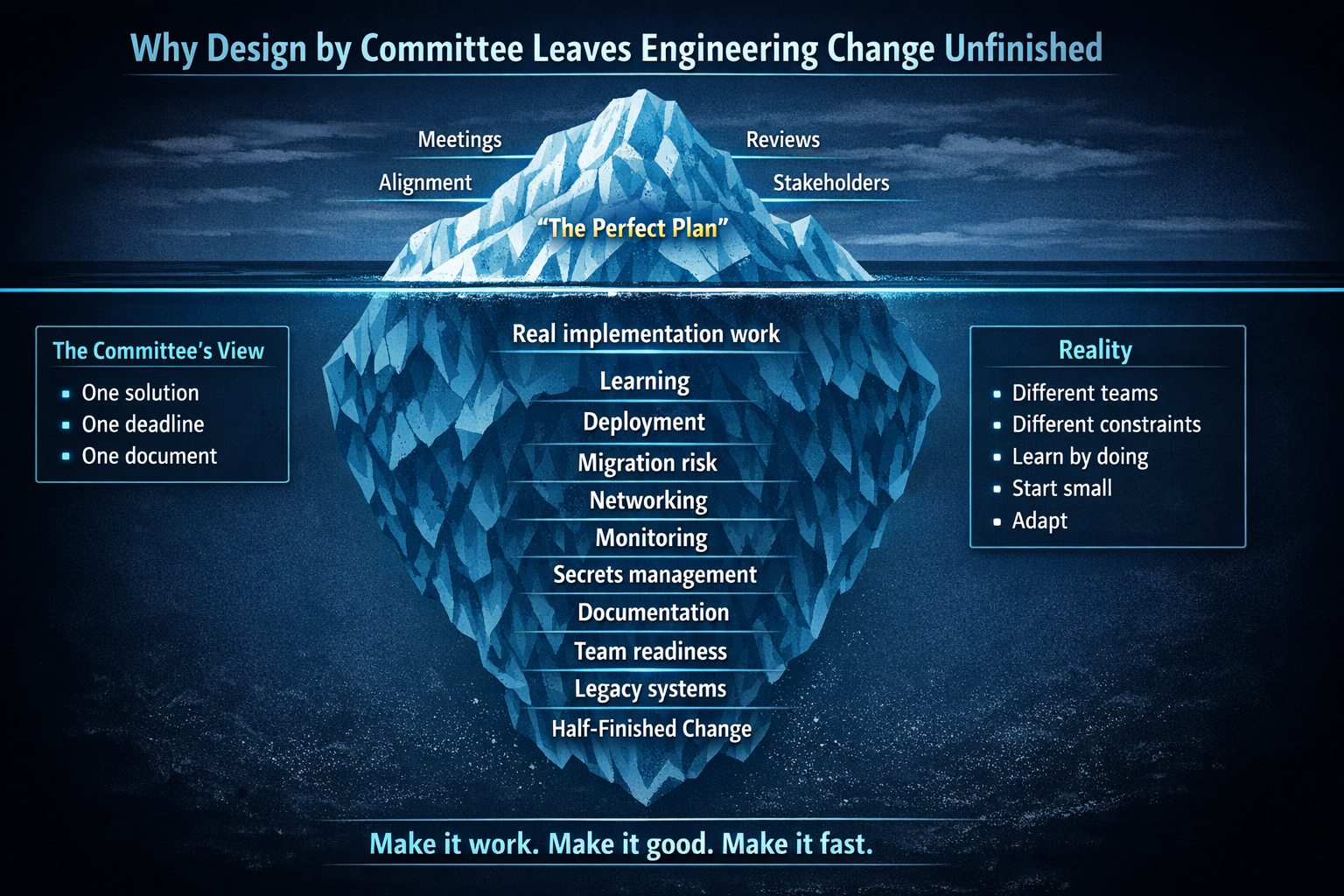

It usually starts with good intentions. People want alignment. They want consistency. They want to reduce risk. So a proposed change gets pulled into more meetings, more reviews, more stakeholders. Before long, the goal is no longer to try something, learn from it, and improve it. The goal becomes finding the one perfect solution that will work for every team.

That is usually where momentum dies.

Because in real engineering organisations, there rarely is one perfect solution. Teams have different systems, different risks, different constraints, different levels of readiness. The more you try to force one universal answer too early, the more abstract the decision becomes. It grows. It slows down. It tries to account for everything. And by the time anything finally lands, reality has already taught you things the original plan did not know.

That is not failure. That is normal.

The failure is building a process that treats learning as a problem instead of the whole point.

The biggest risk is not just slowness

When change is driven by committee, the biggest problem is often not that it takes too long. It is that it never really finishes.

Instead of delivering something small and useful, organisations create another half-complete initiative stranded in no man's land. The old way has already been disrupted. The new way is not fully in place. The documentation no longer matches reality. Teams are unclear on what they are supposed to follow. And the organisation is carrying the cost of both the old world and the new one without getting the full benefit of either.

That is how things get worse, not better.

You end up with yet another initiative that started with a grand ambition and ended with confusion and drift. Nobody can clearly say it succeeded, but everyone has to live with the mess it left behind.

To me, that is one of the strongest arguments against trying to solve too much upfront.

If you make the scope too broad and the coordination too heavy, you massively increase the chance that nothing lands cleanly. You do not just delay progress. You create unfinished change.

And unfinished change is expensive.

The silver bullet mindset

There is a belief that enough meetings and enough smart people in a room can design the final answer upfront. It sounds responsible. It often produces the opposite of progress.

A new platform, architecture, or engineering practice cannot be discussed into existence. The assumptions that look sensible in a slide deck are not the same ones that survive contact with a real codebase, a real deployment pipeline, or a real team under pressure.

That is why I keep coming back to a simple pattern:

Make it work. Make it good. Make it fast.

In that order.

Too many organisations invert that. They try to make it perfect before they have made it real.

ADRs are not the problem

I am not against ADRs. Martin Fowler's description of what they should be is close to what I actually want. Short. A page, maybe two. One decision per record. The context that led to it, the alternatives considered, the trade-offs, the consequences. Immutable once accepted. If the thinking changes, you write a new record that supersedes the old one.

Fowler also makes a point I think is underappreciated: the act of writing an ADR is often more valuable than the record itself. Writing a document of consequence forces you to say what you actually believe and why. In a group, that surfaces disagreements that might otherwise stay hidden until implementation. That clarifying effect is the real value.

If every team used ADRs the way Fowler describes, I would have nothing to write about. But they do not. My problem with ADRs has never been the format. It is what happens to them in practice.

Michael Nygard coined the term in 2011. His argument was simple: large documents are never kept up to date, nobody reads them, and without understanding the rationale behind a decision people either blindly accept it or blindly change it. Both are bad. His solution was a short text file, one or two pages, written as a conversation with a future developer. Context, decision, consequences. That is it.

Fifteen years on, teams still get this wrong in the same ways.

The failure modes are well documented by people like Zimmermann, Keeling, and others. Reviewing wording instead of decision quality. Skipping alternatives. Reopening accepted records instead of superseding them. ADRs becoming architecture bureaucracy. Records written so long after the decision that the reasoning has already been forgotten, or written after the fact to justify a choice already made in a hallway conversation.

I have seen most of these in person. ADRs that try to be mini design documents. ADRs so short they record the decision but not the reasoning. Records created after implementation rather than before. Documentation theatre, where the ADR exists to satisfy a process rather than support a decision.

ADRs absorb the habits of the organisation using them. When a company falls into design by committee, ADRs become one of the places that habit shows up. Instead of recording a decision, they become a vehicle for trying to eliminate uncertainty upfront. They stop being a note of what we believe now and start becoming an attempt to define the whole future before the work begins.

That is when they start to behave like waterfall.

A bloated ADR tries to solve the needs of many teams, in many contexts, all at once. It leaves little room to say, "This is our current bet, but what we learn in step one may change step three." It starts sounding less like a decision record and more like a contract with a future nobody fully understands yet.

A good record should follow the inverted pyramid: the decision and rationale first, details later. It should say what we think, why we think it, what constraints matter, and what we expect to learn. If there is supporting material, link to it. And when reality gives us better information, we write a new record that supersedes the old one. That way there is a clear log of decisions and how long each one governed the work.
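The record-and-supersede pattern can be sketched as a tiny append-only log. This is a minimal Python sketch under stated assumptions: `DecisionRecord`, `DecisionLog`, and the sample titles and dates are hypothetical, not part of any ADR tool or of Nygard's or Fowler's descriptions. It shows two properties the paragraph relies on: an accepted record is never edited (the dataclass is frozen and duplicate numbers are rejected), and superseding a record gives you, for free, how long each decision governed the work.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)  # frozen: an accepted record cannot be edited in place
class DecisionRecord:
    number: int
    title: str
    decision: str  # decision and rationale lead; supporting detail is linked, not inlined
    accepted_on: date
    supersedes: Optional[int] = None  # number of the record this one replaces, if any


class DecisionLog:
    """Hypothetical append-only log: changing your mind means adding a record."""

    def __init__(self) -> None:
        self._records: list[DecisionRecord] = []

    def add(self, record: DecisionRecord) -> None:
        if any(r.number == record.number for r in self._records):
            raise ValueError(f"ADR {record.number} exists; write a new record that supersedes it")
        self._records.append(record)

    def successor(self, number: int) -> Optional[DecisionRecord]:
        """The record that supersedes `number`, or None if it is still in force."""
        return next((r for r in self._records if r.supersedes == number), None)

    def governed_days(self, number: int, today: date) -> int:
        """Days a record governed the work: until its successor was accepted, else until today."""
        record = next(r for r in self._records if r.number == number)
        nxt = self.successor(number)
        end = nxt.accepted_on if nxt else today
        return (end - record.accepted_on).days

    def current(self) -> list[DecisionRecord]:
        """Records still in force, i.e. not superseded by any later record."""
        return [r for r in self._records if self.successor(r.number) is None]


# Hypothetical usage: a first bet, then a new record once the first service taught us more.
log = DecisionLog()
log.add(DecisionRecord(1, "One shared deployment template for all services",
                       "Start every service from the same template.", date(2026, 1, 5)))
log.add(DecisionRecord(2, "Shared template with team-owned extensions",
                       "Keep the template's required parts; let teams extend the rest.",
                       date(2026, 3, 2), supersedes=1))

print([r.number for r in log.current()])             # [2]
print(log.governed_days(1, today=date(2026, 4, 5)))  # 56
```

Record 1 is never rewritten; it simply stops being current once record 2 supersedes it, and the log still shows that it governed the work for 56 days.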

I am not arguing against ADRs, and I am not arguing for chaos. Written decisions can be useful. The problem starts when teams treat them as a way to discover the final answer before they have learned enough to know it. In practice, most meaningful engineering change is iterative. You make a sensible bet, try it in a real context, learn from the result, and then refine the decision. The real question is not whether you wrote an ADR. It is whether the decision-making process helped you move, learn, and adapt.

Two migrations, two approaches

I have seen both sides of this.

At a previous company, the leader announced to the entire organisation that everything would be on Kubernetes within three months. The decision had the confidence and theatre of certainty, but not the evidence. I remember sketching the iceberg model to show how much of the real implementation work was still hidden beneath the surface. The three-month target was not a plan. It was a guess dressed up as a commitment.

Unsurprisingly, we missed the target. A follow-up message had to explain that it had not happened. You cannot commit to a deadline for something you do not yet understand.

At University Press, we approached a similar migration very differently. We moved services onto Kubernetes across multiple teams. We did not rely on formal ADRs or a large upfront design exercise. Instead, teams stayed aligned through regular cross-team conversations, a shared direction of travel, and a leaner approach to implementation. We did just enough to move forward, learned from the work as we went, and adapted based on reality.

I have written before about the importance of understanding the problem before changing the process. That is what we did here.

We started with one service. That gave us real information very quickly. We learned what deployment actually needed, what configuration mattered, what the rough edges were, and what flexibility teams would need in practice. From there, the ops people could define sensible constraints without pretending they should dictate every implementation detail for every team.

That effort succeeded not because we had the perfect document, but because we created the conditions to learn. The first migration was decree first, learning later. The second was vision first, learning through execution.

Large-scale engineering change always contains unknown unknowns. Some things can be reasoned about upfront. Others only reveal themselves in delivery. That is why the goal should not be to eliminate uncertainty on paper, but to reduce it through small moves, fast feedback, and real learning.

One pattern does not mean one identical solution

Success does not mean every team ends up using the exact same solution in the exact same way. In fact, that is often a sign the standard is too rigid. A service with complex networking needs is not the same as a simpler internal application. A mature team with strong operational habits is not in the same place as a team still dealing with legacy systems. If you force both into exactly the same implementation, one of them will be carrying unnecessary pain.

So the goal should not be to impose one identical answer for everybody.

The goal should be to define the lines clearly enough that teams can colour within them, while still having the agency to choose what works best for their own problem.

That is not a failure of standardisation. It is a smarter form of it.

The platform team might define the shape of the contract: security expectations, deployment structure, required interfaces, observability standards, the mechanism by which a service is discovered and deployed. But within those boundaries, teams should have room to decide what makes sense for their service's lifecycle, networking needs, runtime, and operational realities.
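To make "lines teams can colour within" concrete, here is a minimal Python sketch of a contract check a platform team might run. The field names (`health_check_path`, `metrics_port`, `run_as_non_root`), `check_service`, and the two sample manifests are hypothetical illustrations, not a real platform's schema. The check enforces only the shared boundary; any other keys, such as a team's runtime or networking setup, pass through untouched.

```python
# Hypothetical shared contract: the only fields every service must get right.
REQUIRED_FIELDS = {
    "health_check_path": str,  # deployment structure: how the platform probes the service
    "metrics_port": int,       # observability standard: where metrics are scraped
    "run_as_non_root": bool,   # security expectation
}


def check_service(manifest: dict) -> list[str]:
    """Return contract violations; keys outside REQUIRED_FIELDS are the team's call."""
    problems = []
    for key, expected_type in REQUIRED_FIELDS.items():
        if key not in manifest:
            problems.append(f"missing {key}")
        # exact type check, so True is not accepted as a port (bool subclasses int)
        elif type(manifest[key]) is not expected_type:
            problems.append(f"{key} should be {expected_type.__name__}")
    if manifest.get("run_as_non_root") is False:
        problems.append("run_as_non_root must be true")
    return problems


# Two hypothetical teams: different choices inside the lines, same contract at the edges.
simple_app = {"health_check_path": "/healthz", "metrics_port": 9090,
              "run_as_non_root": True, "replicas": 2}
networked_app = {"health_check_path": "/ready", "metrics_port": 9102,
                 "run_as_non_root": True, "runtime": "jvm",
                 "network_policy": "custom-egress", "replicas": 6}

print(check_service(simple_app))     # []
print(check_service(networked_app))  # []
print(check_service({"metrics_port": "9090"}))
# ['missing health_check_path', 'metrics_port should be int', 'missing run_as_non_root']
```

The design choice is deliberate: the check only rejects what breaks the shared boundary, so a team with complex networking needs and a team running a simple internal app both pass without being forced into the same implementation.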

For example, if an organisation adopts event-driven architecture, it may matter that events follow the same naming conventions, versioning rules, and schema standards. It may matter that teams agree what guarantees exist around ordering, retries, and idempotency. But it does not necessarily matter whether one team uses Kafka and another uses RabbitMQ, if both satisfy the same contract.
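A minimal Python sketch of what "the same contract, any broker" can look like, assuming a hypothetical naming rule of `<domain>.<entity>.<verb>` and integer schema versions. `Event`, `EventPublisher`, `InMemoryPublisher`, and `IdempotentConsumer` are illustrative names, not a Kafka or RabbitMQ API. The contract lives in the event shape and the publisher interface; a team's adapter for whichever broker it chose would sit behind `EventPublisher` and is not shown. The in-memory publisher stands in so the sketch runs without infrastructure, and the consumer shows one common way to honour an idempotency guarantee: deduplicate on a stable event id so a retried delivery is handled once.

```python
import json
import re
from dataclasses import dataclass, field
from typing import Protocol

# Hypothetical shared naming rule: "<domain>.<entity>.<verb>", lowercase, e.g. "orders.order.placed".
EVENT_NAME = re.compile(r"^[a-z]+(\.[a-z_]+){2}$")


@dataclass(frozen=True)
class Event:
    name: str
    version: int   # schema version; a breaking payload change bumps it
    event_id: str  # stable id so consumers can deduplicate retried deliveries
    payload: dict = field(default_factory=dict)

    def __post_init__(self) -> None:
        if not EVENT_NAME.match(self.name):
            raise ValueError(f"{self.name!r} breaks the event naming convention")
        if self.version < 1:
            raise ValueError("schema versions start at 1")

    def to_bytes(self) -> bytes:
        body = {"name": self.name, "version": self.version,
                "event_id": self.event_id, "payload": self.payload}
        return json.dumps(body, sort_keys=True).encode("utf-8")


class EventPublisher(Protocol):
    """The shared contract. What sits behind it (Kafka, RabbitMQ, ...) is each team's choice."""

    def publish(self, event: Event) -> None: ...


class InMemoryPublisher:
    """Stand-in for a broker-backed adapter so the sketch runs without infrastructure."""

    def __init__(self) -> None:
        self.sent: list[bytes] = []

    def publish(self, event: Event) -> None:
        self.sent.append(event.to_bytes())


class IdempotentConsumer:
    """Handles each event_id at most once, so a retried delivery is harmless."""

    def __init__(self) -> None:
        self.seen: set[str] = set()
        self.handled: list[str] = []

    def handle(self, raw: bytes) -> bool:
        message = json.loads(raw)
        if message["event_id"] in self.seen:
            return False  # duplicate: already processed
        self.seen.add(message["event_id"])
        self.handled.append(message["name"])
        return True


publisher = InMemoryPublisher()
placed = Event("orders.order.placed", version=1, event_id="evt-1", payload={"order_id": 42})
publisher.publish(placed)
publisher.publish(placed)  # a retry after a timeout sends the same event again

consumer = IdempotentConsumer()
print([consumer.handle(raw) for raw in publisher.sent])  # [True, False]
```

Swapping `InMemoryPublisher` for a team's own broker adapter changes nothing the consumer relies on, which is the point: the standard is the contract, not the transport.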

Good standards define the boundaries that let teams work together. Bad standards try to make every team solve the problem in exactly the same way.

You want consistency where consistency is valuable, and flexibility where flexibility is necessary.

How you drive change determines what kind of team you get

When change is imposed through a committee mandate and a rigid document, teams disengage. They follow instructions. They implement what they are told. They have no ownership of the decision, no space to adapt it, and no reason to invest in whether it actually works in their context. You get compliance, not commitment.

When change is driven by people who genuinely believe in it, who go first, hit the hard edges, learn from it, and help others adopt it, you get something very different. You get people who can move the idea through reality. Not just enforce the process, but find the awkward operational details, discover where the original thinking was incomplete, and bring that learning back into the wider organisation.

One team may solve secrets management before anyone else. Another may discover issues with networking. Another may improve how deployment works. Those lessons go back into the shared guidance so the next team starts from a stronger position.

That is how organisations learn well. Not through one giant design produced centrally, but through smaller experiments feeding better knowledge back into the system.

Stop confusing alignment with progress

One reason design by committee is so seductive is that it looks productive.

There are meetings. There are diagrams. There are approvals. There is a document. It feels like progress because a lot of activity is happening.

But activity is not the same as learning, and it is not the same as delivery.

Sometimes all that has really happened is that the organisation has become better at talking about change than making it.

A finished small success is worth more than a grand cross-team programme that drifts into another unfinished initiative. One leaves you with evidence and something to build on. The other leaves you with partial adoption, confused teams, and stale documentation.

My view

As usual, the answer is balance. Writing nothing down is one extreme: decisions live in people's heads, get forgotten, and get relitigated six months later. That is how organisations lose institutional memory. Trying to design the final, absolute answer upfront is the other extreme. That is how organisations lose momentum.

Engineering change is not a finite problem you can solve on paper. It is an ongoing process of making bets, learning from them, and adjusting. Good organisations build that learning into the way they make decisions, not around it. The goal is not to be certain upfront. It is to make the best bet you can with the information you have, then update quickly as reality gives you better information. I wrote more about this in "Engineering decisions are bets, not proofs".

ADRs are a tool. A good one, when used well. But no document can substitute for actually doing the work and learning from it.

I am not anti-ADR. I am anti-pretending that a document can replace learning. In engineering change, you usually understand more by getting something real into motion than by trying to agree the whole future in advance.

Start small. Make a bet. Try it in practice. Give teams guardrails, not handcuffs. Let them learn what works for their own context. Finish something real. Then spread what you learned.

That is how change becomes real.

Make it work. Make it good. Make it fast.